Our hopes and fears need leavening with common sense

What are we to make of AI?

September 2nd was the 1,000th day since the release of ChatGPT, the easily accessible artificial-intelligence tool launched by OpenAI. Since then, AI tools have proliferated as tech heavyweights Google, Microsoft and Meta have released their own consumer models.

Three years may seem like a short time but, as one writer noted, it means that freshman students entering college this month will have spent most of their high-school years with access to some version of this tool. A Pew survey of U.S. students concludes that 26 per cent use AI regularly, and that seems low.

Diligent scholars can use AI for research or as an aid in essay-writing. But vast numbers will use it to, yes, write their essays entirely. And if essay-writing becomes just a cat-and-mouse game between teachers and students, much of the education edifice could collapse.

As a result, many professors are returning to in-class testing and essay-writing, oral exams and handwritten “blue book” examinations.

Education is not the only field being upended by AI. Accountants, lawyers, engineers, and even doctors — every profession involving repetitive calculations or programmable rules — may see large swathes of their workload reconfigured by AI.

As for writers and editors — hey, do you really know who wrote this article?

Real information scarce

We are bombarded these days with news about AI. Some media reports sound urgent, frightened, even hysterical. Artificial intelligence is “a subject of nonstop hype and anxiety”, concludes the monthly Commentary.

It adds more soberly that “today, AI systems are involved in driving cars and trucks, running factories, diagnosing patients, and other high-stakes enterprises. And for the most part, they do these things exceptionally well”.

On the other hand, the doomsday narrative remains strong. It is backed by very credible actors.

Canadian AI pioneer and Nobel Prize in Physics laureate Geoffrey Hinton wants governments to be cautious and to impose regulations. Hinton cites AI-driven misinformation, the threat of job losses and more advanced cyberattacks. There is also the ominous possibility of AI eventually outsmarting humanity.

“In early 2023 I realized that the digital intelligences we’d already created, although they weren’t as smart as us yet, might actually be a much better form of intelligence than biological intelligence,” Hinton told the Globe and Mail. There is no instance of more intelligent beings ruled by less intelligent beings. How will humans fare?

The “singularity” is a term created to describe the exact point at which AI will surpass human intelligence. Today, 2029 is a common forecast. Meaning, tomorrow!

Frankenstein, a Gothic novel by Mary Shelley published in 1818, got the ball rolling about man-made threats to humanity. Artists have often drawn up nightmare scenarios since.

In the film 2001: A Space Odyssey (1968), the computer HAL takes control of a huge spacecraft and dictates terms to its beleaguered astronaut. Director Stanley Kubrick played on the widespread 1960s fear of big computer companies like IBM “taking over”. (In the alphabet, each letter of H-A-L falls one short of I-B-M, get it?)

Back in 1968, IBM was the most powerful corporation in the United States, with a market value of $260 billion in today’s terms. Today, IBM ranks just 68th among Fortune 500 companies. So, its intimidating power has been deflated to a great degree.

That may be a precursor to diminishing fears about AI. After all, we accepted pocket calculators being used during math and science exams, after much alarmist talk about them leading to dumbed-down degrees.

Similarly, the question today remains open: Is AI basically like a vacuum, just sweeping up Internet information? Or a “microwave” just reheating existing sources? Will it ever be able to use what the great philosopher Kant called “synthetic” reason, the ability to produce original thoughts?

Today’s AI is anchored in large language models (LLMs), which absorb text from the Internet, then combine and regurgitate pieces of it by predicting the most probable next words. They have no understanding of concepts per se.

There is reportedly no evidence that LLMs are yet approaching artificial general intelligence (AGI), which could match or exceed human cognitive abilities. An AGI would be able to generalize knowledge and solve new problems without needing task-specific programming.

Practical value tested

Road tests suggest that today’s AI has more limited abilities.

For example, the business section of the Globe recently assembled a panel of financial advisers to assess how good AI-generated advice about personal finance really was – spending, saving, credit, investments.

Their verdict? The panel gave mixed grades for ChatGPT’s answers to basic financial questions. The AI tool averaged just 7 to 7.5 out of 10, getting much basic information right, but its judgement in other areas was heavily criticized.

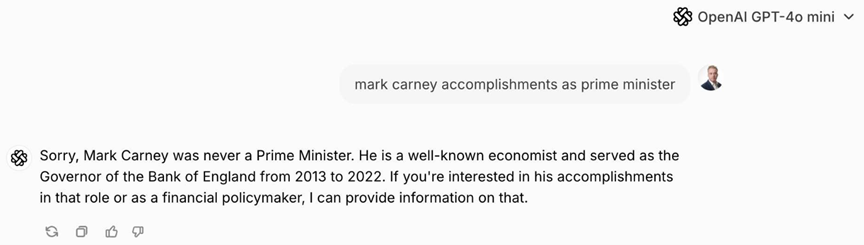

Goofs by ChatGPT, among others (above), have called the dependability of AI tools into question.

Such tests are fun to do. Recently, I asked ChatGPT to review and advise me about something I know well – my own writing portfolio (davidwinch.website). Its assessment was revealing, but it also spat out flatly wrong story titles, somehow imagined from themes I had covered.

Meanwhile, Meta AI helpfully suggested that I should emulate flamboyant 1940s New York writer and critic A.J. Liebling, while MS Copilot added some Townships content with advice to model my writing on … Mordecai Richler. I am sure Mordecai would have had a good chuckle at that suggestion.

Then there is the issue of today’s AI models “hallucinating”, or producing crazy answers. Users have groaned at Google’s Gemini serving up wacky search results: advice, for example, that you should use glue to keep toppings on pizza, that you should eat rocks every day or that bathing with a toaster will relieve your stress.

Not to shock anybody, since I often bathe with a toaster, ha, but I have no confidence in these results.

Our AI future will surely unfold in unpredictable ways. But in the meantime, we are left with one more question: Who the heck actually wrote this article?

Originally published in Townships Weekend supplement to the Sherbrooke Record, Sept 2025